Channel explosion in sequencing

Death of the grid?

Every year it’s the same. You’ve picked out all the new pieces for your display, performed the power calculations, assembled all the new elements into a great design and know exactly how many control channels are being used. Then it’s time to sit down and stare at that big, empty Grid. You know what I’m talking about. It’s where the magic of linking the music to the lights happens. That magic is far from easy. You’ve got hundreds of control channels and hundreds of points in time during the music where the lights change. That can translate to hundreds of thousands of little boxes to fill with on, off, fade, twinkle and shimmer commands to make your display come alive. You start perspiring because there’s no doubt that, with the new stuff in your display, it’s going to take even more time than last year to dazzle the audience. There’s got to be a better way.

How did we get to this? Why are there so many control channels that now make sequencing so complex? One word: hardware. It has clearly started to eclipse software in complexity and is outstripping the sequencing software’s ability to easily handle all this new functionality. Think about it. Currently on the market we have some hard-to-program devices such as the Light-O-Rama RGB (Red Green Blue) Color Cosmic Ribbon (CCR) and the D-Light RGB Firefli. Then there are the various RGB floods and wall washes as well as the emergence of inexpensive RGB light strings. Add complex DMX (Digital Multiplex) devices such as moving head spotlights, lasers or RGB matrix panels and you really start to see an explosion in channel counts that makes sequencing a nearly impossible feat with the tools we have available to us today.

The writing on the wall is clear: the Grid in its current form must be improved or replaced, but with what? The first clue is to look at how we create our displays. Most are built from elements such as mega-trees, mini-trees, arches, stars, snowflakes, lights in bushes, lights on fascia and lights along a sidewalk, along with countless other possibilities. We then combine these different elements to make up a completed display. When we look at a specific element such as a mega-tree, we see that at a high level it has individual properties such as the number of light strings around the tree and the number of different light colors available. These properties define the object. Remember, in this case the object is the entire mega-tree with all of its components.

In a small way we have the concept of objects and properties of objects today in the form of “tracks” or “virtual controllers” in our sequencing software. You create a track and then put all the individual control channels of a mega-tree into that track. Of course this is easy if you have eight channels of a single color in a mega-tree, but it gets considerably more complex when you have a mega-tree with 16 strings and four possible colors, making for a total of 64 channels. Now think about that tree spinning and changing colors during a really fast-paced tune. It’s exciting to watch but a very time-consuming process to sequence.

Imagine the near future when you have one mega-tree with 16 strands of 100 RGB light pixels and suddenly you’re confronted with 4,800 control channels. Now you have an almost impossible task to tackle with our current systems for sequencing. Your head is already hurting. Where’s the aspirin?
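To see where those numbers come from, here is a small back-of-the-envelope sketch. The function name and its parameters are made up for illustration; the only assumption is that every independently controlled color takes one channel, so an RGB pixel takes three.

```python
def channel_count(strands: int, units_per_strand: int, channels_per_unit: int) -> int:
    """Total control channels for one display element.

    A "unit" is anything on a strand that is controlled on its own:
    the whole strand for conventional lights, a single pixel for RGB pixels.
    """
    return strands * units_per_strand * channels_per_unit


# Conventional mega-tree: 16 strands, each controlled as one unit,
# with four colors that each need their own channel.
conventional_tree = channel_count(strands=16, units_per_strand=1, channels_per_unit=4)

# RGB pixel mega-tree: 16 strands of 100 pixels,
# with 3 channels (red, green, blue) per pixel.
pixel_tree = channel_count(strands=16, units_per_strand=100, channels_per_unit=3)

print(conventional_tree)  # 64
print(pixel_tree)         # 4800
```

Same tree, same footprint in the yard, and 75 times as many boxes per timing mark.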

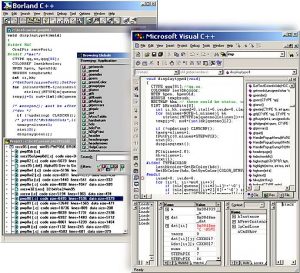

Let’s take a moment and look back at what occurred in the software industry over the past 15 years. We see some commonality between the issues they faced way back then and what we are facing with sequencing today. Back in the early 1990s software was most often written line by line. If you wanted to make text scroll on the screen, you manually instructed the hardware to do exactly that, which meant many lines of complicated computer code. This meant expensive programmers spent a lot of time working their magic just to perform repetitive “low level” functions. We generally do the same thing today in sequencing. If you want a “jumping” sequence on your arch you manually go through and click each box in the sequencing grid for each segment/channel in the arch with the effect (on, off, fade, etc.) you want.

Programmers started to view this constant rewriting of low level code to perform the most basic functions as a waste of time, so they turned to something called “object oriented programming” or OOP. With the visual tools built around this technique a programmer could “drag” an object from a predefined group into their project, such as an input field object. Once the object was in the project, the programmer defined the object’s properties, such as what letters or numbers could be entered into the input field, if the field was to be verified or how many letters could be placed in the input field. The software then did all the work behind the scenes to put the code in place to make the object act the way the programmer designed. In the end this saved the programmer time so they could focus on the sophisticated high level programming instead of the boring low level details.

Taking the OOP concept in the software world to our sequencing world, it’s pretty safe to say that the future of sequencing will be “object oriented sequencing” or OOS. Here is the basic process that will take place when sequencing our displays:

The first step will be to create a three dimensional map or grid that defines our display. This map allows the sequencing software to know the physical location of all the display elements or objects in relation to each other. It is important for the software to know where groups of objects are in relation to each other, such as a row of mini-trees going from left to right in the front of your display. This map also serves to create the visualizer display used to preview your sequences.

The next step is defining all the individual objects that make up your display on the 3-D map. You’ll drag predefined objects onto the map and be prompted to thoroughly define each object’s properties. A sample might include:

Name/description

Power consumption

Height/width

Controller type, controller ID and/or channel(s)

Bulb direction (individual RGB pixels)

Number of separate colors used

Number of bulbs
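In software terms, a display object with the properties listed above might look something like the sketch below. This is only an illustration: the class name, field names, units and sample values are all invented here and do not come from any existing sequencing package.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class DisplayObject:
    """One display element and its properties (all names are hypothetical)."""
    name: str
    power_watts: float
    height_m: float
    width_m: float
    controller_type: str
    controller_id: int
    channels: List[int]
    color_count: int
    bulb_count: int
    bulb_direction: str = "n/a"  # only meaningful for individual RGB pixels
    position: Tuple[float, float, float] = (0.0, 0.0, 0.0)  # x, y, z on the 3-D map


# Example: a single-color arch with eight segments on channels 1 through 8.
arch = DisplayObject(
    name="Left arch",
    power_watts=40.0,
    height_m=1.2,
    width_m=2.5,
    controller_type="DMX",
    controller_id=1,
    channels=list(range(1, 9)),
    color_count=1,
    bulb_count=800,
    position=(-3.0, 0.0, 1.0),
)

print(arch.name, len(arch.channels))
```

Once the channels live inside the object, the rest of the software can talk about “the left arch” and never mention a channel number.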

Once all the individual objects have been placed on the 3-D map, grouping of individual objects will occur. An example would be to define individual mini-trees into a group of mini-trees or all the red lights in your display into one or more unique groups. This allows the group to act as one “cluster” object, so that an effect can be placed on the whole group at once.
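Grouping could be sketched roughly as follows. The `Element` and `Group` classes, their fields and the sample display are hypothetical; the point is only that a group is a named collection that knows the physical order of its members.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Element:
    name: str
    x: float    # left-to-right position on the display map (assumed units)
    color: str


@dataclass
class Group:
    """A "cluster" object: several elements treated as one (hypothetical)."""
    name: str
    members: List[Element]

    def left_to_right(self) -> List[Element]:
        # Physical order matters for effects that sweep across the group.
        return sorted(self.members, key=lambda e: e.x)


elements = [
    Element("Mini-tree 3", x=3.0, color="green"),
    Element("Mini-tree 1", x=1.0, color="red"),
    Element("Star", x=2.5, color="red"),
    Element("Mini-tree 2", x=2.0, color="red"),
]

mini_trees = Group("Mini-trees", [e for e in elements if e.name.startswith("Mini-tree")])
red_lights = Group("All red", [e for e in elements if e.color == "red"])

print([e.name for e in mini_trees.left_to_right()])
print([e.name for e in red_lights.left_to_right()])
```

Note that one element can belong to several groups at once, just as the same mini-tree can be both “a mini-tree” and “a red light.”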

Now the sequencing software is set up to “understand” your display and the definition will not need to be re-created until your display changes next year. This new type of display definition is close to what might be called our channel map today.

You are finally ready to sequence your show. Along the side of your computer screen or Y axis will be each of the display objects you created in the prior steps. Next you add in the music or video that forms the standard timing marks you are familiar with along the top of your computer screen or X axis. You can continue to use tools like the beat wizard or VU wizard to generate timing marks or beat tracks.

On the sequencing screen will be a library of pre-defined effects. These may apply to a single type of object or a cluster depending on the object type. An example of an effect on a single object would be a “jump/wave” effect; you would drag and drop this jump/wave effect on an arch object and you would be prompted to define the properties of the effect being applied, such as performing a left to right jump/wave with a smooth fading tail. Once properties are defined you simply move the effect on the object to the point in time where you want the effect to take place and extend the duration for as long as you want it to take place, such as during a slow section of the song or guitar lick.
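Behind the scenes, a jump/wave effect like this would still boil down to ordinary grid commands. Here is a minimal sketch of how that expansion might work, assuming an arch with one channel per segment. The `Command` record, the `wave` function and its parameters are invented for illustration and are not taken from any real product.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Command:
    """One filled-in box on the old Grid (hypothetical format)."""
    channel: int
    start: float   # seconds into the song
    end: float
    action: str    # "on" or "fade"


def wave(channels: List[int], start: float, duration: float,
         left_to_right: bool = True, fading_tail: bool = True) -> List[Command]:
    """Expand one high level wave effect into low level commands."""
    order = channels if left_to_right else list(reversed(channels))
    if not order:
        return []
    step = duration / len(order)  # each segment leads the wave for one step
    commands = []
    for i, channel in enumerate(order):
        t0 = start + i * step
        commands.append(Command(channel, t0, t0 + step, "on"))
        if fading_tail:
            # The tail fades out over the following step, so the last
            # segment's fade runs one step past the nominal duration.
            commands.append(Command(channel, t0 + step, t0 + 2 * step, "fade"))
    return commands


arch_channels = [1, 2, 3, 4, 5, 6, 7, 8]
commands = wave(arch_channels, start=10.0, duration=2.0)

print(len(commands))   # 16
print(commands[0])
```

One drag and drop with two properties takes the place of sixteen boxes, and the boxes are still there for anyone who wants to tweak them by hand.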

These effects can also be applied to groups of objects. If you wanted to make all the lights in your entire display roll from left to right, transitioning in color from red to green, just drop the “wave” effect onto the grouping of all objects, define the color transition property, set the time period and you’re done. What would have been a 15-minute process in the old days is now done in just seconds.
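A group-wide color wave could be sketched along these lines. Everything here is an assumption made for illustration: objects are reduced to a name and a left-to-right position, the wave steps evenly from one object to the next rather than by actual distance, and the color is a simple linear blend.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]


def blend(a: RGB, b: RGB, t: float) -> RGB:
    """Linear mix of two colors; t=0 gives a, t=1 gives b."""
    return tuple(round(x + (y - x) * t) for x, y in zip(a, b))


def color_wave(objects: List[Tuple[str, float]], start: float, duration: float,
               first: RGB, last: RGB) -> List[Tuple[float, str, RGB]]:
    """Return (time, object name, color) events sweeping left to right."""
    ordered = sorted(objects, key=lambda o: o[1])  # sort by x position
    n = len(ordered)
    events = []
    for i, (name, _x) in enumerate(ordered):
        t = i / (n - 1) if n > 1 else 0.0  # 0.0 at far left, 1.0 at far right
        events.append((start + t * duration, name, blend(first, last, t)))
    return events


# (name, x position) pairs, deliberately listed out of order.
display = [("Star", 4.0), ("Mega-tree", 0.0), ("Arch", 2.0)]
events = color_wave(display, start=30.0, duration=4.0,
                    first=(255, 0, 0), last=(0, 255, 0))

for event in events:
    print(event)
```

The software sorts out which object is where; you only say “red to green, left to right, four seconds.”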

Effects can also interact directly with the music. You might want the legs of an arch to appear as a VU meter, where each leg is “jumping” to the beat of the music, all without direct input on your part. As you put more adjacent effects onto the same object, the sequencing software will merge these effects together. As one effect ends the next effect smoothly transitions into place. You will be able to change the speed of that transition by simply adjusting how much these effects overlap, with no abrupt shifts from one effect to another. The effects library will be modular so other people can build new types of plug-in effects that do not come with the standard base product.
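One simple way such merging might work is a linear crossfade over the overlap: the longer two effects overlap, the slower the handoff. The sketch below assumes each effect can report a brightness level (0 to 100) at any moment. The function names and the linear blend are illustrative choices, not a description of how any existing software does it.

```python
from typing import Callable

Level = Callable[[float], float]  # time in seconds -> brightness 0..100


def crossfade_weight(t: float, a_end: float, b_start: float) -> float:
    """Share of effect B at time t, where B starts before A ends."""
    if t <= b_start:
        return 0.0   # only effect A is active
    if t >= a_end:
        return 1.0   # only effect B is active
    return (t - b_start) / (a_end - b_start)


def merged_level(t: float, level_a: Level, level_b: Level,
                 a_end: float, b_start: float) -> float:
    """Blend two overlapping effects into one brightness value."""
    w = crossfade_weight(t, a_end, b_start)
    return (1 - w) * level_a(t) + w * level_b(t)


def bright(t: float) -> float:
    return 100.0   # effect A: steady full brightness


def dim(t: float) -> float:
    return 20.0    # effect B: steady low glow


# Effect A ends at 12 s and effect B starts at 10 s: a two second overlap.
for t in (9.0, 11.0, 13.0):
    print(t, merged_level(t, bright, dim, a_end=12.0, b_start=10.0))
```

Drag the second effect further under the first and the overlap grows, which stretches the transition without touching a single box.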

As you can see, the need to set individual fade, on, off, twinkle and other functions is for the most part no longer necessary in the OOS world as the “high level” sequencing has done this for you behind the scenes. For most people this will be more than sufficient. Under all that high level sequencing is still the basic on, off, twinkle, shimmer and fading. All these “low level” commands are just written by the high level sequencing. For those people that want to really fine tune a lighting effect they can still get down to the low level sequencing and change the individual commands just like in the old days.

This new form of high level sequencing will free us from the mundane tasks of clicking off those thousands of little boxes in the Grid and allow us to focus on some really great new elements coming onto the market. I can’t wait. Every day Christmas is one day closer. Let’s hurry up and get this done!

David Moore is owner of HolidayCoro.com which produces the CoroTree™, BabyBorder™ and other unique and time saving display elements. His upcoming 2010 display will contain nearly 500 channels and a gigantic sequencing grid he’s already dreading.

This article was included in the March 2010 issue of PlanetChristmas Magazine.

By David Moore